Chris over at Pixelsumo just mailed me some more links about the background and technology behind the Microsoft Surface table. One is from Ars Technica and explores the technology in more depth (much of which is available in the press download from Microsoft). The other, from Popular Mechanics, has more demos of other systems, including Jeff Han’s. He seems to be the poster boy for multi-touch at present (along with the iPhone).

There are also a couple more from Abstract Machine and Fast Company. The Abstract Machine one by Douglas Edric Stanley is great for putting all this newness in perspective (actually it’s all pretty old, it has just hit the mainstream now. Almost).

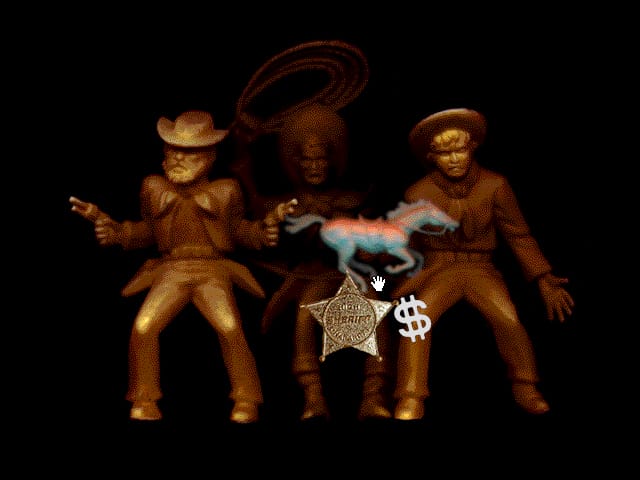

The video of Jeff Han also has an interview with him and he talks about how the mouse is an ‘indirect pointing device’ that is one step removed from the content. This is something we talked about a lot at Antirom. At the time (and still, in much interactive content) there was a preponderance of interfaces that had buttons with labels like “Click here to view the video”. You clicked a real button on the mouse to make the mouse pointer click a fake button on the screen to make the video play, when actually you could just click on the video and/or move the mouse around to change the speed, etc. The image below is of an audio mixer, for example. You just drag the images which have sounds ‘attached’ to them (so when your mouse is closer to each one, it’s louder and the image is less blurred) rather than using a fake 3d mixing desk.
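To make the mixer idea a bit more concrete, here is a rough sketch in Python of how that kind of proximity mapping could work. It is not the original Antirom code: the linear falloff, the `radius` and `max_blur` values and the `proximity_mix` name are all assumptions made purely for illustration.

```python
import math


def proximity_mix(pointer, sources, radius=200.0, max_blur=8.0):
    """Map pointer distance to a (volume, blur) pair for each sound image.

    pointer  -- (x, y) position of the mouse
    sources  -- dict of name -> (x, y) position of each image
    radius   -- assumed distance at which a sound becomes silent
    max_blur -- assumed blur amount for a fully out-of-range image
    """
    mix = {}
    for name, (x, y) in sources.items():
        distance = math.hypot(pointer[0] - x, pointer[1] - y)
        # 1.0 when the pointer is on the image, 0.0 at or beyond the radius
        closeness = max(0.0, 1.0 - distance / radius)
        volume = closeness
        blur = max_blur * (1.0 - closeness)
        mix[name] = (volume, blur)
    return mix


if __name__ == "__main__":
    images = {"drums": (0, 0), "bass": (100, 0), "strings": (500, 0)}
    for name, (volume, blur) in proximity_mix((0, 0), images).items():
        print(f"{name}: volume={volume:.2f} blur={blur:.1f}")
```

The point is that there is no separate control layer: the position of the pointer relative to the content is the only input, and both the sound and the image respond to it directly.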

Much of our experimentation and invention – which led to things like the scrolling engine (Shockwave required) – was based upon trying to strip back as many layers of interface as possible. In the end we wanted to directly manipulate the content so that the content was the interface and quite often the interactivity was the content. I’m looking forward to the first time I get to have a go on one of these multi-touch interfaces to see whether you really do have that experience.

Thanks Chris!

Andy Polaine
